API3 Core Technical Team Reports

API3 DAO Development Update, Operations Cycle #1

This post accounts for the technical development activities during the first operations cycle from December 17th, 2020 to January 31st, 2021.

Airnode

This was an extremely exciting cycle for us, as we deployed a fully-functional Airnode on the cloud. If you want to find out what that is like, you can test it on a number of chains with our starter project. We have also made integrations with a number of smart contract platforms, and even published a guide to “self-integrate”, as the demand is difficult to keep up with.

In addition to complete unit test coverage, an end-to-end test suite was implemented in this cycle and populated with fundamental test scenarios. The work on various API integration tooling (validator, OAS to OIS converter, etc.) is ongoing. We’ve also been exploring and analyzing various gas price strategies, and how they work with the unique way the Airnode fulfills requests.

Authoritative DAO

We had provided dOrg with a prototype of the pool contract with significant scaling and UX issues that were going to be ironed out according to the specs delivered at the end of undertaking #1. Given the pace of undertaking #2, we determined that this was unlikely to be achieved in a timely manner. Furthermore, the resulting ambiguity in the user flow was blocking the finalization of the frontend design, and consequently its development.

As a solution, we deployed significant development and design resources from our core team to refine the specs in a way that satisfies the business needs, is easy to implement, and gas-cheap and easy to use for the user. Although this distracted the core team, it was worth it because the project is back on track and is expected to follow a timeline comparable to the original.

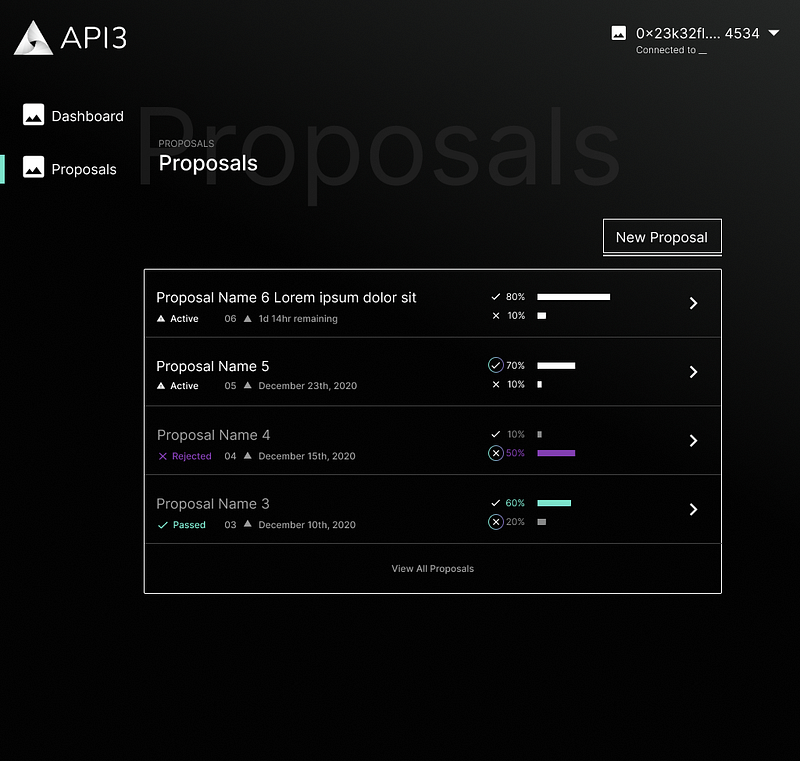

A DAO dashboard mockup. We are targeting an MVP in terms of the dashboard to ensure that the monolith is not being encumbered by redundant features.

To prepare for undertaking #3, we are in discussions with a number of companies for the security audit of the DAO contracts. Our goal is to find options that deliver high-quality audits and can accommodate our timeline.

ChainAPI

We have announced that we will start working on ChainAPI this cycle, and it’s probably apt to give an update. We’re pleased to say that the development has quickly hit its stride, and we are working on the integration platform undertaking, while laying the groundwork for the following undertakings as well.

Scaling the team

We have made a total of 5 technical hires this cycle that will work on API3 business full-time. As the technical team, we are faced with two options: we either keep building full speed ahead, or we work on scaling up for a greater long-term potential. The inherent potential of the project, as well as the interest from the public and potential users, has directed us towards the latter. Therefore, recruitment was one of the major things that we worked on in this cycle, and it looks like this will continue for a while.

In addition to looking for employees, we have started mobilizing some of the founding teams (ChainAPI, Curve Labs, Curvegrid) to contribute to various development and integration efforts, and we expect these contributions to pick up speed in the following months. We are also exploring outsourcing opportunities; dOrg is already working on the authoritative DAO implementation, and we have recently started working with LimeChain on the protocol contracts.

As a note, we have a lot of technical community members on our Discord, enthusiastic to learn and contribute. Unfortunately, reaching out to each of you personally is not possible at this stage, but we are working on a model that will harness this potential. In the meantime, please be patient with us, read the docs, and tinker with the example projects. If you don’t feel like being patient, find a few like-minded peers and come up with a project!

Conclusion

What we have cared about the most in this cycle was to keep the authoritative DAO undertaking moving along, and we have succeeded at this. While doing so, we were able to make significant progress with Airnode, make a decent number of hires, and kick off multiple projects with internal and external teams. These efforts are greatly increasing our momentum, and will have spillover effects in the following cycles.

API3 DAO Development Update, February 2021

We were initially planning to publish a development update per-operations cycle (see the one for the first cycle here). However, we already have a lot of material built up, so a monthly schedule will serve us better at this point. Without further ado, let’s begin!

Airnode

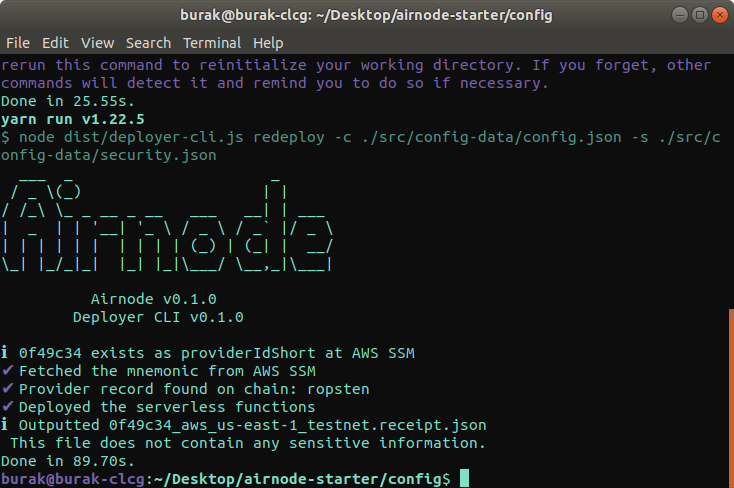

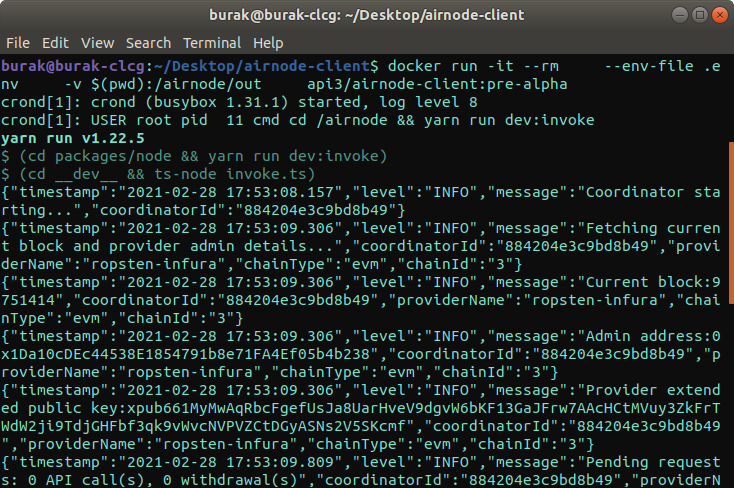

We are planning to apply some finishing touches to Airnode and its protocol before releasing our first stable version, v0.1.0 (this would be the Airnode-alpha monolith on our roadmap). However, the existing version is feature-complete and is quite robust (based on internal and external usage on a variety of chains). Because of this, we decided to freeze this version under the name pre-alpha, and are currently using it to prototype API, dApp, and smart contract platform integrations. If you want to use Airnode before the release, make sure that you’re using the pre-alpha version. The changes between pre-alpha and v0.1.0 will be functionally minor, which means that the transition will be easy.

We strongly advocate for serverless hosting as the ideal solution for oracle nodes due to a variety of reasons such as failure resistance, easy redundancy, on-demand pricing, etc. Of course, the ideal workflow is to implement the integration while running your Airnode locally, then deploy it using the deployer once you are happy with the results. While this was available from the start, it was buried in an obscure README.md file in the monorepo. We have now containerized the node too (the previous container was the deployer), which people can easily use to run an Airnode locally and read the node logs on their terminal. Although this is intended for development and debugging, we will be maintaining this container to be usable in production for hosting on premise or cloud providers that the Airnode deployer will not support (for example Digital Ocean, which doesn’t provide native serverless functionality). Since this configuration will share the same stateless architecture, we are expecting it to be similarly robust.

I quickly spun up a clone of the airnode-starter node running locally on my machine for a screenshot. Note that this and the serverless deployment will work in tandem without stepping on each other’s toes, which shows how easy it is to add redundancy to Airnode across different hosting solutions.

During this month, we have worked with LimeChain to have the pre-alpha version of the request–response protocol contracts audited. We are pleased to announce that the audit did not find any vulnerabilities in the contracts. We will continue using this version for our early integrations, while scheduling a more comprehensive audit for v0.1.0. This was also planned to be the first step of further cooperation with LimeChain on building solutions based on the Airnode protocol contracts, which you will hear more about in the future.

Airnode has a novel way of broadcasting fulfillment transactions that is very responsive to the status of the current gas price market. Furthermore, the requester covering the gas costs and the requests being fulfilled by requester-specific node wallets present some unique solutions to the cost–performance tradeoff problem that is common with oracles. This month, we have done statistical analysis, live testing, and further statistical analysis based on the results of the tests to come up with a final gas price strategy that can be optimized for the specific use-case.

Authoritative DAO

We’re happy to say that we’re on the final stretch with the authoritative DAO. The first audit is scheduled with Solidified for March 8–22. Following the revisions, a second audit is scheduled with Quantstamp for April 4–9. dOrg will be finalizing the dashboard implementation in the meantime, and barring an unforeseeable event, we are planning for the authoritative DAO to be live before April is out. Note that this is also when staking will go live, and we will be publishing posts, going over the tokenomic design and the related design decisions ramping up to that.

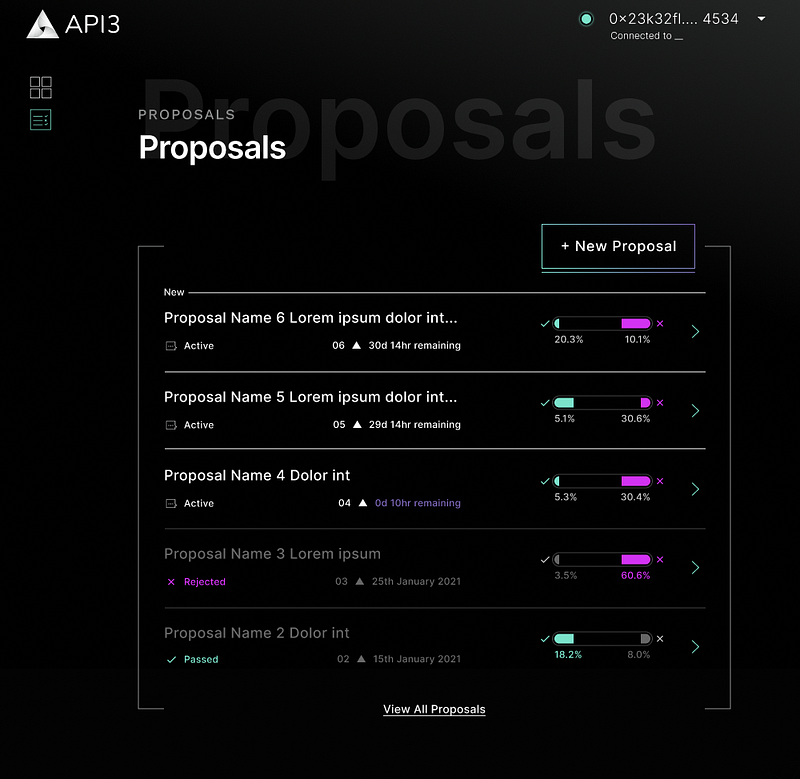

On the design side, the DAO dashboard UX design has been finalized (some mechanics were previously ambiguous). In the meantime, we have had the graphic design polished, and are hoping to have this version implemented before we go live.

The revised graphic design of the dashboard.

Documentation

We are not only building a node for first-party oracles with Airnode, but also the protocol to integrate Web APIs to smart contract platforms. Since we are planning Airnode to become a very fundamental building block for smart contract use-cases that require secure, middleman-free data (which is quite a large audience), high quality documentation will be critical.

We have some special requirements:

API3 interacts with a number of stakeholders (API providers, dApps, developers, DAO members, smart contract platforms, etc.) and the documentation requires a specialized facet for each of them.

Our protocol has detailed specification formats both for integrating APIs and configuring Airnode. Since not all users will be on the same version, we need good versioning support on the documentation side.

Since API3 is a decentrally governed, open source project, we need the documentation tooling to fit that. This requires qualities such as easy local development, traditional git workflow and not depending on proprietary/paid services and frameworks.

After some shopping around and prototyping, we have decided that VuePress satisfies our needs after some customization. We are currently migrating the existing docs to the new format; here’s a sneak peek.

Conclusion

Actually, a lot more is going on, yet these developments are not at a stage where they can be showcased (especially visually), so we would rather not spoil them. One final thing: We have updated our open positions page on the website. Please take a look and make sure to share it within your network. See you in the next post!

Andrea Marcias, Pyramid Altar.

API3 DAO Development Report, March 2021

To summarize last month’s development report, you currently can use the pre-alpha version of Airnode to integrate an API to a smart contract (see the related monorepo branch and docs).

This version of Airnode can be operated as:

- An AWS serverless function

- A Docker container (on cloud or locally)

The pre-alpha contracts have been audited by a third party, and this version is being used to prototype integrations. We are working on a v0.1.0, which functionally will be exactly the same, yet have a stable (read: unchanging) protocol and UX flow based on the feedback from the pre-alpha.

Roadmap

In the most recent community call, there was a question about whether the roadmap is flexible. If you have referred to the roadmap more than a few times, you probably have noticed that monoliths and undertakings move around. This reflects the fluidity of the roadmap, which is why we prefer to use a board instead of a static image or webpage. Such changes tend to be based on new data, and what we have found out is that not only first-party oracle-based dAPIs, but also stand-alone first-party oracles are very much in demand (and more importantly, in a way that cannot be substituted by third-party oracles in any feasible way).

In this paradigm, quantifiable security through insurance is the focus, and dAPIs are a tool to be used to maximize the amount of insurance that can be provided, which is redundant for a lot of untapped use cases. In other words, the dAPI concept is a tool to be able to provide a specific (even niche) level of security, and not the goal. Being able to purchase first-party oracle access and insurance is what creates the demand for the API3 token, so focusing on this aspect (instead of dAPIs specifically) will align the direction of the project with its tokenomics more directly and at a wider scale. In short, the roadmap is indeed fluid, and is coupled with the strategic direction at an abstract level.

Authorizers

Let’s start with a related topic: The specifications for the authorizer contracts are established, followed by their implementation. To briefly explain what an authorizer contract is, it’s used to extend the Airnode request–response protocol to allow an Airnode operator (i.e., API provider) to manage access to their first-party oracle based on a customized policy.

We strive to design a flexible framework that will cover all potential use-cases, as we want to build the standard to integrate APIs to smart contracts. On the other hand, we’re not fans of under-defining solutions under the guise of “giving the user freedom”, because that is often used as an excuse to dodge the more difficult problems (how do you decentralize oracle governance, how do you quantify security, how do you specify integrations, etc.). The solution is to implement the customizable authorization framework mentioned above, but also provide the user with ready-made authorizer contracts.

Authorization is about managing access, and access management is the primary tool for monetizing a service. This is why establishing specs for these ready-made authorizer contracts is difficult; it’s not a purely technical matter — nothing about oracles ever is — and requires strong assumptions about monetization both for API providers and the API3 DAO. Furthermore, one needs to consider the UX implications; if you need oracle node operators to reconfigure their node or make a transaction each time a new use-case will use their services, your solution will not scale.

Considering all these factors, we came up with two authorizer contracts:

- Api3Authorizer.sol allows one’s Airnode to be accessible with the usage of API3 tokens without requiring any API provider interaction (more details about this will be announced later on). This is the primary authorizer contract that will be utilized by the API3 partners the majority of the time.

- SelfAuthorizer.sol allows the API provider to whitelist users themselves based on any criteria they want. ChainAPI (with its requester interface undertaking) will include an interface (essentially, a dApp) that will allow the API provider to do this. Note that this will simply be an interface; the API provider can also do this by interacting with the authorizer contract directly.

An Airnode operator is free to use a combination of these authorizers plus ones that they may have implemented to enforce more complex authorization policies that these ready-made contracts may not support.
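To make the idea of combining authorizers more concrete, here is a minimal Python sketch. It is not the contract code: the names `SelfAuthorizerModel` and `is_authorized` are hypothetical, and the rule that a requester is accepted as soon as any one authorizer approves is an assumption made for illustration only.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, Set

# Hypothetical model: an authorizer is anything that answers
# "may this requester use this Airnode?" with True or False.
Authorizer = Callable[[str], bool]


@dataclass
class SelfAuthorizerModel:
    """Toy stand-in for a whitelist managed by the API provider."""

    whitelist: Set[str] = field(default_factory=set)

    def allow(self, requester: str) -> None:
        # The API provider adds a requester based on any criteria they want.
        self.whitelist.add(requester)

    def __call__(self, requester: str) -> bool:
        return requester in self.whitelist


def is_authorized(requester: str, authorizers: Iterable[Authorizer]) -> bool:
    # Assumption (not stated in the report): access is granted if ANY
    # of the configured authorizers approves the requester.
    return any(check(requester) for check in authorizers)
```

In this model, an operator's custom policy is just another callable in the list, which mirrors how a ready-made authorizer and a self-implemented one could sit side by side.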

Airnode developments

Work on dAPI contracts has started. The initial goal is to have a typical aggregating contract that will be integrated with the request–response protocol, with more novel features to be delivered in the future.

The new deployment file specs and flow for v0.1.0 are established and are currently being implemented. This will support configurations that utilize multiple simultaneous deployments across cloud providers as first-class citizens, which will result in unmatchable resiliency.

The OIS validator package is updated according to these new specs, and will go into use with v0.1.0.

Docs

We have moved our docs to the api3.org navigation bar because they have reached a stable point. They have a lot of customized components such as a version selector (that only lists pre-alpha at the moment) and an extra table of contents on the right that makes navigation easy. The docs starting from v0.1.0 will be divided into sections according to roles. At the moment, a documentation page targeted at the API3 DAO members (hopefully you!) is being worked on.

Authoritative DAO & Staking

In last month’s report, we left off where we were preparing for the first DAO audit on March 8th. I’m happy to say that we received an approving report on March 22nd. The suggestions are largely addressed at the moment (with the addition of some significant gas cost optimization) and we’re doing our final tweaks before sending it off for the final stamp of approval. This will probably coincide with the start of our second audit on April 4th, mentioned in last month’s report.

The beginning of the first audit also marks Curve Labs, one of the API3 founding teams, starting to play a much more active role in our DAO development. You can see their first proposal here, which covers the phases starting from the audit to the launch of the authoritative DAO. What is exciting about this development is that as mentioned in their proposal, they are planning to take ownership of the long-term development of the authoritative DAO and its extensions, which was something that was very much needed.

I’ll end this with some very significant news that may have gone under your radar. AWS has announced that they now provide managed Ethereum nodes for public chains through Amazon Managed Blockchain. We knew that this was in the works as early as last Summer based on our communications and were designing the Airnode architecture accordingly, but it being delivered this early accelerated the timeline for API3. The implications are two-fold:

On the practical side, a managed Ethereum node is the perfect complement to Airnode, the managed oracle node. As an alternative to using centralized service providers like Infura and decentralized service providers like Pocket Network, this will allow an API provider to run their own Ethereum node with no effort. Since Airnode is already run on the cloud as a serverless function for maximum availability, operating the Ethereum node on the same platform doesn’t degrade security (and is even preferable).

More importantly, public blockchains are starting to become a part of the cloud stack in the form of managed services, and we can expect this to be followed by integrations with more chains and cloud providers. This is bad news for the middleman node operator and solutions that depend on it as their moat, yet is perfectly in line with our vision for Airnode as the API gateway for blockchains, and positions API3 as the provider of this piece of technology.

API3 DAO Development Report, April 2021

This is the final development report for cycle #2, following the February and March reports. See the DAO & Staking Part I post for the state of the development on that front. I’ll be keeping this report brief and factual and focus on the rest of the DAO & Staking posts as there is a lot of material that needs to be covered.

Airnode

In the March report, I mentioned that the new Airnode deployment file specs and flow had been established to support multiple simultaneous deployments across different cloud providers and regions. This month, the deployer package was updated to implement these. In addition, the RRP admin CLI was updated and a lot of additional minor updates were made towards the v0.1.0 release. (A note about the “v0.1.0 when” questions: pre-alpha is doing the job well for prototyping and there is no particular reason to rush the release.)

It has always been the plan for the Airnode deployer to be migrated to use bare Terraform (instead of Serverless Framework), as that would provide the optimal flexibility regarding using additional cloud infrastructure (VPCs, managed Ethereum nodes, etc.) and different cloud providers, yet it was approached as more of a long term plan. This month, we had additions to the team that could make that a reality much sooner. Therefore, this is now on the menu for v0.1.0.

Authoritative DAO

The Aragon framework uses MiniMe to implement governance tokens by default. The authoritative DAO was implemented with a similar approach. In one of the audit reports, it was (rightly) suggested that this should be replaced because its access methods (based on binary search) require a variable amount of gas, which may end up being a problem. As a solution, we replaced all instances of these access methods with workarounds with deterministic bounds for gas costs, both defusing the risk and optimizing the gas costs.
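For readers unfamiliar with the issue, the sketch below models a checkpoint history in Python. `value_at` is the familiar binary-search lookup, whose number of steps grows with the length of the history. `value_at_bounded` is a hypothetical illustration of what a lookup with a fixed upper bound on work could look like; the function names and the `max_lookback` parameter are assumptions, and this is not the workaround used in the DAO contracts.

```python
from typing import List, Tuple

# (from_block, value) pairs, sorted by from_block in ascending order.
Checkpoint = Tuple[int, int]


def value_at(checkpoints: List[Checkpoint], block: int) -> int:
    """Binary search for the value in effect at `block`.

    The number of iterations grows with log2(len(checkpoints)),
    so the cost of a lookup is not fixed in advance.
    """
    if not checkpoints or block < checkpoints[0][0]:
        return 0
    lo, hi = 0, len(checkpoints) - 1
    while lo < hi:
        mid = (lo + hi + 1) // 2
        if checkpoints[mid][0] <= block:
            lo = mid
        else:
            hi = mid - 1
    return checkpoints[lo][1]


def value_at_bounded(
    checkpoints: List[Checkpoint], block: int, max_lookback: int = 4
) -> int:
    """Hypothetical bounded lookup: inspects at most `max_lookback` entries."""
    start = max(0, len(checkpoints) - max_lookback)
    for from_block, value in reversed(checkpoints[start:]):
        if from_block <= block:
            return value
    if start == 0:
        # The whole history was inspected and nothing existed at `block`.
        return 0
    raise LookupError("block is older than the bounded lookback window")
```

The trade-off in this toy version is explicit: the bounded lookup never does more than `max_lookback` comparisons, at the price of refusing queries that reach too far into the past.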

Unrelated to the audits, we updated the DAO structure so that there are two levels of quorum that need to be reached to enact different types of proposals. This will allow us to better secure critical functionality such as API3 token minting rights management (by having it require a higher quorum threshold than day-to-day proposals), and will be explained in detail in one of the upcoming DAO & Staking posts. The DAO contracts are still undergoing audits at the moment.

Open Bank Project

The big news of this month was the announcement of our long term development partnership with the Open Bank Project. As implied by the lifetime of the partnership, there is a long road ahead to solve the API connectivity problem for the banks, and there is no better reason to start working on it right away. This month, a combination of the core technical team and the integration people of the API BD team started familiarizing ourselves with the OBP solutions. In addition, we started having joint calls with OBP for exploration and to plan the following development steps.

dAPIs

This month, we have conceptualized the RRP-based dAPI architecture, which ended up elegantly mirroring the Airnode RRP architecture (i.e., calling a dAPI or a single Airnode looks and feels rather similar to the user). Alternative reduction methods have been implemented in a very scalable way, both complexity and gas cost-wise. We are currently implementing a dAPI server contract that will use these reduction methods to create and serve dAPIs in a permissionless way.
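As a rough illustration of what a reduction method does, the Python sketch below collapses several oracle responses into a single value. The report does not name the specific reduction methods, so the median and the mean here are only assumed examples, and `median_reduce` and `mean_reduce` are hypothetical names. Integer arithmetic is used to mimic on-chain values.

```python
from typing import Sequence


def median_reduce(values: Sequence[int]) -> int:
    """Reduce responses to their median (integer division for even counts)."""
    if not values:
        raise ValueError("at least one response is required")
    ordered = sorted(values)
    mid = len(ordered) // 2
    if len(ordered) % 2 == 1:
        return ordered[mid]
    return (ordered[mid - 1] + ordered[mid]) // 2


def mean_reduce(values: Sequence[int]) -> int:
    """Reduce responses to their integer mean."""
    if not values:
        raise ValueError("at least one response is required")
    return sum(values) // len(values)
```

With responses such as `[101, 99, 100, 5000, 98]`, the median stays at 100 while the mean is pulled up by the single outlier, which is why the choice of reduction method matters for an aggregated data feed.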

Keep tuned-in for the DAO & Staking series posts as they will dive into the implementation details, which you should definitely be aware of if you are intending to stake and participate in governance.

API3 DAO Core Technical Team Development Report, May 2021

We’re at the end of the first month of our third cycle. This month has been a particularly productive one regarding DAO development, which has also interrupted the DAO & Staking series (1, 2, 3). Although I’m planning to finish the series to serve as a definitive reference for the motivation of the authoritative DAO implementation details, this may end up happening after the authoritative DAO launch.

In the previous month’s report, I mentioned that replacing the Serverless Framework dependency in the Airnode deployer with Terraform could be feasible. This will allow us to port Airnode to other cloud providers and extend it with additional cloud infrastructure (or provide it as a module to be added to existing infrastructure) a lot more easily. I’m glad to say that this was already achieved this month, which is quite a significant improvement.

The work on dAPI contracts mentioned in the previous month’s report has continued. Considering that dAPIs are a fairly isolated vertical in terms of implementation and operation, a dAPI team separate from the core technical team is being considered. Together with having a separate DAO development team and the integration work being undertaken by the business development team members, this would allow the core technical team to focus more of its efforts on Airnode, which should improve our efficiency.

The core technical team had to be very involved with DAO development this month, and I’m planning to post a report about the entire development process once we’re done. We had been conducting an additional audit with Team Omega (an auditing team composed of senior DAOstack developers) while waiting on the initial Quantstamp report, which allowed us to use this time efficiently. The audits from Solidified, Quantstamp and Team Omega ended up providing a broad coverage from different perspectives, and will result in a much more secure product.

We received the initial audit report from Quantstamp on May 21st, and the revisions were sent back on May 30th. While waiting for the final report from Quantstamp, we will be preparing the revisions for the Team Omega audit. In parallel to this, we are finishing up on the implementation of the front-end and applying the audit-related updates. Overall, strong core technical team engagement resulted in the DAO development speed picking up significantly. This came at the price of slowing down the development of the core solutions, yet this is only temporary and the authoritative DAO is deemed important enough to warrant this.

API3 DAO Core Technical Team Development Report, June 2021

In the previous month’s report, it was mentioned that we were awaiting the final audit reports for the DAO contracts. We’re happy to announce that all three audits from Solidified, Quantstamp and Team Omega are now finalized. This ended up being a usefully diverse combination, where Solidified gave an initial vote of confidence, Quantstamp provided a broad coverage, and Team Omega was much more DAO/governance-focused and went even beyond the scope of a regular security audit.

We have achieved a feature-complete version of the DAO dashboard this month, which supports three main user flows:

- The user doesn’t have time to keep up to date with governance or they don’t want to vote actively. Then, the user stakes and delegates their voting power, and continually receives staking rewards without additional interaction.

- In addition to staking and receiving rewards, the user actively participates in governance by voting on proposals.

- The user is a contributor to the project and makes DAO proposals, for example to fund their efforts.

The DAO dashboard is hosted on IPFS and interacts with the DAO contracts directly, without depending on any intermediary services (in contrast to dApps depending heavily on caching solutions for a more Web 2.0-like user experience). This makes it fully decentralized and operationally robust. The resulting DAO is a very suitable template for subDAOs, as it will be able to scale in numbers easily due to not having to be maintained in any way (perhaps other than making sure that the dashboard is kept pinned on IPFS, which can trivially be done in a completely trustless way through a variety of services).

The completion of the dashboard was followed by a closed test and user interviews with subjects chosen from the community. After applying updates based on this feedback, we started a public test on the Rinkeby testnet on June 28, which you can participate in right now. See the #public-dao-test channel on our Discord for more information. We are currently focused on investigating any issues faced by the users and applying updates to improve the UX.

The last two months have been hectic: a group composed of core technical team and ChainAPI developers took over the authoritative DAO development and worked very hard to deliver a high quality product, so I believe a credit roll is appropriate here. Emanuel and Andre worked on the frontend, Michal did our styling, Tamara designed the wireframes and Leandro did our graphical design. I should also include Ashar and Santiago for their contributions by testing, reviewing and documenting the contracts. They all did a fantastic job and I thank them here on behalf of the community. If you want to learn more about the development process of the DAO, and specifically why it ended up being finished later than expected, you can read my recent post here.

Of course, the rest of the team was making progress on their own endeavors in the meantime. Our docs are being built up constantly, we are supporting the BD team with technical help in Sovrynthon, and we are working on a new contract that will give the API3 token a new utility that is not mentioned in the whitepaper. The ChainAPI development is continuing, steady as ever. However, the spotlight should definitely be on the public DAO test, and we’ll be back with a more oracle-focused development report next month!

Bruno Liljefors, Havsörnar jagande en ejder, 1924.

API3 Core Technical Team Report, July 2021

In the June report, we announced that the public DAO test started on June 28. After a fast-paced and fruitful test process (which we again thank the participants for), we announced the mainnet launch, which was executed successfully on July 15. This has been the most financially ambitious achievement of the project, and surpassed the public token distribution in its scale. This is the second project-scale milestone that the core technical team has achieved after the delivery of the pre-alpha version of Airnode, and we see it to be our mission to deliver similarly long-running and mission-critical projects.

To give some context for governance purposes, I’ve published an article, recounting the unanticipated development process of the authoritative DAO. The relevance of that to this report is that the core technical team was only responsible for “overseeing and supporting” the development of the DAO contracts and dashboard, yet we stepped up to undertake the entire development and operational tasks when it ended up becoming a necessity. To finalize the project in a timely manner, we had to deploy a total of five senior developers (three full-time, two part-time) and two designers from the core technical team and the ChainAPI team (distributed approximately 50–50 between the two teams).

This has two implications:

- The core technical team is willing to get the DAO out of trouble at the cost of diverging from its own plans. Furthermore, it’s quite successful at this. (Good intentions by themselves do more harm than good; taking over the project only to fail would have been disastrous.)

- The only reason we succeeded at this was that we had a number of senior developers across the core technical team and the ChainAPI team that could easily adapt to a project that they weren’t selected for in the first place, and we had the authority to make this call (i.e., “We will stop what we’re doing and will now take over this task that someone else was supposed to do.”)

Performing well when everyone else is doing their jobs perfectly is not a feat, it’s the bare minimum. Through this affair, the core technical team has proven that it can be trusted with turning funds into a general kind of problem solving capacity, which allows it to turn unexpected problems into wins.

It has been more than a year since we started working on Airnode, which is what makes the value proposition of API3 feasible. That’s because if you propose first-party oracles as a solution to the ecosystem building problem around oracles, you first need to answer the question of why we don’t already have first-party oracles and how that will be possible going forwards. We released the pre-alpha version of Airnode towards the end of 2020, aiming to test it in real world conditions and iterate on it so that we have a much stronger foundation to build on. During this time, we received a lot of confirmation that it makes a lot of sense to the stakeholders by design and performs very reliably, but we also discovered some friction points that seem to consistently cause UX and business problems. In a similar way, some of the nice-to-haves that we have implemented at the cost of increasing complexity turned out to be not necessary at all.

During this month, it became clear that there is immediate demand for a future-proof (read: that won’t get breaking updates) and production-ready Airnode with all the new features that we have implemented since the release of the pre-alpha version to address the issues mentioned above. As a result, we started working on Airnode Beta (a working title) this month. We have greatly revised the protocol to simplify the UX and extended it to implement the monetization features that were omitted in the pre-alpha version. Following its audit and the implementation of these changes at the node-end, we’re planning to start using this internally with our partners and release it for the public to use to integrate APIs to smart contracts.

Şahkulu, “saz”-style illuminated manuscript, mid-16th century.

API3 Core Technical Team Report, August 2021

We launched the DAO in July, and have been maintaining it since:

- Fixed a frontend regression that broke the radio buttons

- Redirected dao.api3.org to the DAO dashboard through an IPFS gateway as an alternative to api3.eth.limo, which is not always reliable because of the distributed DNS infrastructure it relies on (api3.eth.limo is still the recommended URL)

- Fixed a number of issues, most notably one that caused an unstable WalletConnect experience

The operations proposals went by smoothly, with no technical issues. Furthermore, the feedback on the staking flow is overwhelmingly positive (apart from the connectivity issues addressed above), which validates the design and implementation. Currently, we have a list of nice-to-have features that we want to implement, yet they do not hold top priority on our to-do list.

Seven weeks after its launch, the DAO has met the staking target of 50% of the total supply. The staking reward has since started decreasing slowly, while the staked amount still sits slightly above the target. The main caveat with such dynamic systems is that if they are not initialized well, it will take very long for them to stabilize. The current behavior indicates that the initial values for the staking reward parameters were estimated well, and it should be smooth sailing around the staking target going forwards.

The fact that the staked amount is increasing slowly despite the reward decreasing slowly can be attributed to the estimated smart contract risk decreasing more significantly, and accordingly, more people finding staking API3 to be a good deal. In a similar vein, DAOv1 started migrating its funds to the authoritative DAO. At the moment, the primary treasury holds 10 million API3, while the secondary treasury holds more than 3 million USDC (a proposal requires 50% quorum to use the funds from the primary treasury, and 15% quorum to use the funds from the secondary treasury). In the absence of incidents, the gradual migration will continue.
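To make the feedback loop concrete, here is a minimal sketch of a reward controller of this kind. It assumes the reward rate moves by a fixed step each epoch (down when the staked share is above the target, up otherwise) and is clamped to a range. The function names, step size, bounds, and starting values are illustrative assumptions, not the parameters of the deployed pool contract; only the 50% target comes from the text above.

```python
def next_apr_bps(apr_bps, staked, total_supply,
                 target_bps=5_000,   # 50% staking target (from the report)
                 step_bps=100,       # assumed update step per epoch
                 min_bps=250,        # assumed lower bound
                 max_bps=7_500):     # assumed upper bound
    """Return the reward rate (in basis points) for the next epoch."""
    # Integer comparison avoids floating-point rounding at the target boundary.
    above_target = staked * 10_000 > target_bps * total_supply
    apr_bps += -step_bps if above_target else step_bps
    return max(min_bps, min(apr_bps, max_bps))


def simulate(apr_bps, staked_per_epoch, total_supply):
    """Apply the update rule once per epoch and collect the resulting rates."""
    history = []
    for staked in staked_per_epoch:
        apr_bps = next_apr_bps(apr_bps, staked, total_supply)
        history.append(apr_bps)
    return history
```

With a well-chosen starting rate, a controller like this only needs a few steps to hover around the target. Starting far from equilibrium means many epochs of drift, which is the initialization caveat mentioned above.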

The DAO dashboard is very minimalist in what it does to provide the following:

- A very simple user experience, as it is meant for all token holders

- Being able to run it on fully decentralized infrastructure that can survive abandonment (in a DAO context, the bus factor also applies at the team scale)

- Being able to run it on chains other than Ethereum mainnet (where certain dependencies in the form of decentralized protocols may not exist)

However, we were quickly informed that this wasn’t going to satisfy our power users. As we were planning to enrich the dashboard with a few additional features, Enormous posted the DAO Tracker on the forum. I recommend clicking around if you haven’t already, but in summary, it’s very good and makes any work by us towards the same end redundant.

The DAO Tracker contributes to API3 in a couple of ways. We recently got two additional technical teams, yet this is one other that popped up in a permissionless way and managed to make a significant contribution without any coordination with the existing teams. This demonstrates that the scope of API3 is large enough that one can imagine an ecosystem of independent actors working on it and pushing it forwards. Another point is that the DAO Tracker is potentially a critical component of API3 governance, like the Telegram group, Discord, Twitter handle, DAO dashboard, representation at community calls, conferences and interviews, etc. It’s healthy for such channels to not be consolidated in the hands of a single team, and this was one of the reasons why we didn’t want the DAO dashboard to be a web application with a traditional backend (that the core technical team controlled). In this regard, the DAO Tracker being hosted by an entity independent from our team is desirable.

In last month’s report, I mentioned that we’re back to focusing our attention on Airnode. We made a lot of progress this month, but we have yet to reach a milestone that would be appropriate to make an announcement at. In parallel to this work, we are overhauling our project management and release management strategy, which should increase the transparency of the state of development (essentially act as a team-scale roadmap), allow Airnode users to track when we will support certain features and help developers find out where their open source contribution would be most effective.

Popamania, album art.

API3 Core Technical Team Report, September 2021

We host our DAO dashboard on IPFS. There are a few ways to reach it:

- You can connect your MetaMask to Ethereum mainnet and go to api3.eth/. This looks up the IPFS CID from the ENS contract and uses a public IPFS gateway to take you to the dashboard.

- You can go to https://api3.eth.limo.

- You can go to https://dao.api3.org to be redirected by api3.org to the public IPFS gateway.

In addition to some not always being available, all of these options require you to trust third parties. As a trustless alternative, we now provide instructions for building and running the DAO dashboard locally. In addition to being more secure, this method will always be available despite potential IPFS infrastructure outages.

In the July report, we mentioned that demand for a new Airnode release was building up, and after the DAO launch, which was most imperative, we finally had the opportunity to focus on it. We responded in three main ways: (1) design a project management and release management flow to support rolling releases (mentioned in the August report), (2) start working towards a fast initial release, which can then be iterated on, (3) finalize the request–response protocol and have it audited.

We have made a lot of progress on all three of these items, but I will focus on (3) specifically. We released the pre-alpha version at the start of this year both as a POC to demonstrate that we can feasibly achieve a large number of first-party oracles, and also to collect feedback from internal and external stakeholders. The contracts of this version were audited, and additional review and usage did not uncover additional issues. Therefore, despite its name, the pre-alpha version is actually quite solid.

One issue with the pre-alpha version is that it keeps the authorizer implementation out of scope, and expects the user to implement it based on their needs (or make all endpoints public, which is quite fine in most cases due to how the protocol is designed to have the requester pay for all gas costs). As described in the March report, the authorizer is where the first-party oracle business model is implemented. This is why we refrained from locking in the authorizer design prematurely, yet we are now in a position where we have a clear understanding.

This month, we implemented authorizers, finalized RRP and are currently undergoing an audit by Certik. Once we pass the audit, we will publish an in-depth article about what has changed in the protocol since pre-alpha, but in short, it’s a much more streamlined user flow + ready-made authorizers.
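As a rough illustration of what an authorizer does, the sketch below models it as a function that answers whether a given requester may call a given endpoint. The policy shown (no authorizers means the endpoint is public, otherwise any single approval is enough) and all names are assumptions made for the example; the audited contracts define the actual behavior.

```python
from typing import Callable, Iterable, Sequence, Set, Tuple

# Hypothetical signature: (endpoint_id, requester) -> allowed?
Authorizer = Callable[[str, str], bool]


def whitelist_authorizer(allowed: Iterable[Tuple[str, str]]) -> Authorizer:
    """Build an authorizer backed by a fixed set of (endpoint, requester) pairs."""
    allowed_pairs: Set[Tuple[str, str]] = set(allowed)
    return lambda endpoint_id, requester: (endpoint_id, requester) in allowed_pairs


def is_authorized(authorizers: Sequence[Authorizer],
                  endpoint_id: str, requester: str) -> bool:
    """Assumed policy: no authorizers means public; otherwise any approval suffices."""
    if not authorizers:
        return True
    return any(check(endpoint_id, requester) for check in authorizers)
```

Keeping the business logic behind a small interface like this is what lets an API provider swap in a different monetization scheme without touching the rest of the request flow.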

As a final note, we would like to showcase the Airnode RRP Explorer, a tool to search for and decode requests and responses submitted through the Airnode protocol (specifically, the pre-alpha version, though updating it should be simple). To explain roughly, for the same reason that a regular Ethereum user needs to refer to Etherscan frequently (and developers a lot more), it’s not possible to imagine widespread adoption of the Airnode protocol without this kind of a tool being available. Therefore, it’s difficult to overstate the importance of this tool, and we’re excited to see how its development will continue.

Wolf of Chazes, shot by M. François Antoine de Beauterne in 1765, displayed at the court of Louis XV.

API3 Core Technical Team Report, October 2021

We are happy to announce that we have released Airnode v0.2! If you have been following our reports, you should know that we have focused our efforts on Airnode since the DAO launch, but we didn’t share further details. The development of Airnode is the most critical aspect of API3 operations, as almost the entirety of our efforts — technical and non-technical — depend on the existence of this oracle node that will enable first-party oracles at scale. In this sense, we’re careful about treating Airnode development with the respect it deserves, and a part of this is preventing it from turning into an object of hype.

Airnode v0.2 is a silent release, meaning that it is public, but not necessarily publicized (this report doesn’t count because we have to tell you about it for governance purposes). What is more significant than the release itself is that we have a release management process in place, which we have tested now with great success by making this release. This will allow us to do rolling releases from here, meaning Airnode being improved iteratively (not that the pre-alpha version wasn’t good, but it had to be frozen and consequently couldn’t be improved further) and being able to address business problems by implementing new features.

I have recently posted an article on what has changed in the Airnode request–response protocol since pre-alpha. Note that a protocol update is only a subset of a node update, and this node update is especially extensive. I’ll try to list some high level items here:

- We switched from using Serverless Framework and Terraform to pure Terraform for managing our deployments. This will be transparent to the user, yet it improved the stability, future-proofness and cloud provider-agnosticism of Airnode significantly.

- We implemented an HTTP endpoint that can be used to make test API calls and an outbound heartbeat function for monitoring, primarily for integration platforms such as ChainAPI.

- The OIS validator is now tested with all of the integrations that the API integrations team has done and is automatically being run as a step by the deployer. This means that if a user attempts to deploy an Airnode with invalid configuration files, the deployer will detect this and throw an error.

- In addition to implementing the authorizer contracts (see the protocol update post mentioned before), we implemented an interface in the airnode-admin package that Airnode operators can use to personally manage the whitelisting statuses of their clients. Note that this is an alternative to the Airnode management UI.

- Configuration files that specify integrations and node operator secrets are reworked for better separation and flexibility.

- Airnode is implemented as a monorepo, which means it is composed of many packages, some of which are useful for developers and node operators in standalone form. In addition to publishing the airnode-deployer Docker image (this is what you use to deploy an Airnode as a serverless function) and the airnode-client Docker image (this is what you use if you want to run Airnode in a container, locally or otherwise), we now also publish all packages of the monorepo as npm packages. This allows you to run the following command on your terminal

npx @api3/airnode-admin derive-airnode-xpub --airnode-mnemonic "nature about salad…"

(to derive the extended public key of your Airnode by providing your mnemonic) or import @api3/airnode-protocol in your contract to inherit RrpRequester to make Airnode requests.

- This is no longer as impressive. The Airnode implementation in the airnode-deployer and airnode-client Docker images is now pre-built, which means the images are a quarter of the size of their pre-alpha counterparts and they download and run much faster, providing a better user experience.

- The pre-alpha documentation was frozen along with the implementation. Since then, we have been working on documentation versioning and v0.2 contents.

- The example project of the pre-alpha version lives in a separate repo, which makes it very difficult to keep up to date. We migrated our examples to the Airnode monorepo at https://github.com/api3dao/airnode/tree/master/packages/airnode-examples and integrated them into our CI tests to make sure that they don’t fall out of date (nothing drives a developer away faster than the example projects being broken). We also extended the examples to not require a public chain or a cloud provider account to reduce developer on-boarding friction.

The one thing that we didn’t do is a complete refactor of the node implementation, as that was already very solid in the pre-alpha version. So even though this is quite a major release, we expect the node to keep behaving stably. I’m sure there are bits that I forgot to mention, but this is where you come in anyway. You’re more than welcome to poke around and give us feedback by creating GitHub issues or swinging by our Discord.

All in all, this was a satisfying end to our August–October grant cycle. This was made possible by a team of great people who take pride in their work. On behalf of myself and the entire API3 community, I thank the members of the core technical team for their great effort. We’re only getting started though, so stay tuned.

Francisco de Zurbarán, Autoportrait, 1650.

API3 Core Technical Team Report, November 2021

We have been working on Airnode v0.3 during November and you can expect it to be released in the following days. We had two main objectives for this release:

- Allow the requester to specify an arbitrary single-level object to be returned (instead of a single point of data)

- Support Google Cloud Platform (GCP) for the serverless configuration

We managed to hit these objectives and a few more, which we’ll discuss in this post.

Returning objects

Say you want an oracle to call https://www.crypto-api.com/markets?from=ETH&to=USD and return you the price of ETH-USD and the total volume of the market. There are commonly two options:

- Make an oracle request for the price and the volume separately

- Implement a specialized, hard-coded adapter that will give the price and the volume fused together

The first option is bad because it’s unwieldy and expensive. The second is bad because it requires this adapter to be developed on a case-by-case basis. Our goal is to develop an oracle protocol that supports seamless and flexible API integrations to smart contracts, which includes protocolizing how the API response needs to be processed. Allowing requests to be specified to return encoded objects instead of a single point of data is the first step of these efforts.

Starting from v0.3, the requesters can specify that they want Airnode to make a specific API call and use the returned JSON to build any single-level object, which will be returned to the chain by the Airnode and decoded there. This is much more flexible (in that the requester is not limited to the existing adapters) and scalable (in that you don’t need someone to pre-build and deploy purpose-specific adapters) compared to existing methods, and should be enough by itself to serve the majority of the potential oracle use-cases. However, we will be continuing our efforts in extending the Airnode protocol for better flexibility in this regard in the following versions.
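The idea can be sketched with the ETH-USD example from above: pick several fields out of the API's JSON response, scale them to integers, and pack them into one payload. The comma-separated `types`, `paths`, and `times` arguments are a hypothetical stand-in for however the requester actually specifies the fields, and the sample response is made up. The encoding follows the standard ABI layout for a tuple of static 256-bit integers, which is one 32-byte word per value.

```python
from decimal import Decimal


def extract(response, path):
    """Follow a dot-separated path such as 'data.0.price' through parsed JSON."""
    value = response
    for key in path.split("."):
        value = value[int(key)] if isinstance(value, list) else value[key]
    return value


def encode_word(value, solidity_type):
    """ABI-encode one static integer as a 32-byte big-endian word."""
    if solidity_type == "uint256" and 0 <= value < 2**256:
        return value.to_bytes(32, "big")
    if solidity_type == "int256" and -2**255 <= value < 2**255:
        return (value % 2**256).to_bytes(32, "big")  # two's complement
    raise ValueError(f"cannot encode {value!r} as {solidity_type}")


def build_response(response, types, paths, times):
    """Encode several fields of one API response into a single payload."""
    type_list, path_list, times_list = (s.split(",") for s in (types, paths, times))
    if not len(type_list) == len(path_list) == len(times_list):
        raise ValueError("types, paths and times must have the same length")
    words = []
    for solidity_type, path, multiplier in zip(type_list, path_list, times_list):
        raw = extract(response, path)
        # An empty multiplier means "use the value as-is".
        scaled = int(Decimal(str(raw)) * int(multiplier or "1"))
        words.append(encode_word(scaled, solidity_type))
    return b"".join(words)
```

A single call such as `build_response({"price": 4123.45, "volume": 1000000}, "int256,uint256", "price,volume", "100,")` yields a 64-byte payload that a contract could decode as `(int256, uint256)`, so one request covers both values without a purpose-built adapter.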

GCP support

We have mentioned that we switched to a pure-Terraform configuration in v0.2 that will allow us to port Airnode to various cloud providers more easily, and in a more maintainable and secure way. This was followed through by extending the cloud provider options for the serverless configuration from the existing AWS to GCP.

Note that this is not necessarily a matter of either/or. The Airnode protocol is uniquely designed to be able to be served by multiple independent deployments for optimal uptime. This means an Airnode operator can use serverless configurations deployed on AWS and GCP simultaneously, and in fact, this is the recommended setup. In this way, providing GCP (or rather, a second cloud provider in addition to AWS) support fulfills a critical step in allowing unbreakable Airnodes to be deployed. We are planning to extend support to Azure and potentially other cloud providers in the future.

Stress tests and adjustments

In a first-party oracle solution, an API is served only by a single oracle, operated by the API provider. This means there is no node-level redundancy, resulting in maximal cost-efficiency. However, this also means that we can’t depend on node-level redundancy for availability and have to build a truly highly available node (even if it’s not actively monitored and maintained by dedicated personnel). Recall that the Airnode protocol allows each requester to specify a different wallet to fulfill their requests, which enables oracles with infinite individual bandwidth. However, the node implementation should also be designed and built in a way to support this, which is one of the reasons why the recommended configuration is serverless.

To fulfill our vision of scaling up to fulfill any and all API connectivity needs, Airnode must be accessible in a permissionless, programmatic way. This is a unique goal in the oracle space, and poses a specific challenge: We can’t depend on access restriction as a security measure, i.e., anyone will be able to spam an Airnode on-chain after programmatically buying access, and Airnode should be built in a way that this is inconsequential. The current serverless configuration gives us the tools to achieve this, yet this is still a lofty goal that needs to be attended to specifically.

While developing this version, we conducted stress tests in various environments to assess the limits of the current implementation. Based on our findings, we made adjustments to our serverless configuration that greatly improved the resiliency of the node to the degree that it’s already better than the available alternatives. However, we have a few further goals around this matter that we want to fulfill in the upcoming versions:

- Improve the node architecture so that it can scale limitlessly (in practice, up to the limit imposed by the cloud provider on an account-basis)

- Allow the user to quantify the capacity they want to allocate to specific chains, and this capacity to be guaranteed by the node

- Come up with an optimal configuration that we can recommend to serve use-cases that will secure an arbitrarily large amount of value

In addition to the above, we implemented an image that wraps the airnode-admin CLI, mainly to allow the API providers to generate a mnemonic for their Airnodes. Features described above are demonstrated with example projects under the airnode-examples package, and our documentation is extended with these features. We recently planned the upcoming v0.4 according to the strategic needs of the DAO, and will start work on it soon.

If you haven’t noticed, we updated our whitepaper to v1.0.2. This was a long time coming, as the described staking reward mechanism was outdated, and although v1.0.1 linked to a post that went through the planned updates, some readers overlooked that and were confused. This update worked the contents of that post into the whitepaper, while removing the outdated content such as the scheduled staking reward graph. What was particularly rewarding was realizing that we had already delivered a lot of the things that we said we would deliver in the whitepaper, and the respective statements needed to be rephrased to reflect that. Changing “we will do”s to “we did”s is the best kind of update a whitepaper can get, and we’re looking forward to doing more of this in the following months.

Pieter Brueghel the Elder, The Hunters in the Snow (winter), 1565.

API3 Core Technical Team Report, December 2021

As promised in the November report, we released Airnode v0.3 on December 7th. We started working on v0.4, which is largely focused on revising how the requests are handled to:

- Enable unlimited scaling (to the point that the cloud provider allows) and chain-specific provisioning of concurrency, which will allow a single node deployment to serve the needs of all API providers, regardless of the number of chains being served or the expected volume. This means Airnode will be deployed the same way — without requiring additional orchestration — if it’s only for testing, serving a single production use-case or serving many production use-cases across many blockchains.

- Allow requesters to specify the API provider to sign the response, yet a separate reporter to submit the fulfillment transaction (see the second figure here, or Section 4.1.1 from our whitepaper). This potentially solves some significant business problems and makes new use-cases possible. We will elaborate on this feature as it comes closer to fruition.

In the meantime, we are also working on the v1.x protocol contracts, which include PSP (publish–subscribe protocol), RRP+PSP Beacons, dAPIs, token lock-up and payment functionality for automating access to API3 services.

After Certik, Sigma Prime has completed a second audit of the v0.x protocol contracts. The audit report can be accessed through our monorepo. No vulnerabilities have been uncovered in the protocol, and releases v0.2 and v0.3 are once again confirmed to be safe to use. Nevertheless, it is planned for the v0.4 release to use a new iteration of the contracts where the suggestions from the audit report are implemented.

This month, we published an article introducing Beacons. A Beacon is a data feed that is powered by a single first-party oracle. It can either be used by itself, or multiple of them can be combined to form dAPIs. This relates to the core technical team in that the Beacons and the Beacon-based dAPI architecture are our design. In other words, similar to what has happened with the authoritative DAO, the core technical team has claimed dAPI (and Beacon) development and operations, despite them not being a part of our responsibilities according to our most recent proposal.
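The text above does not spell out how Beacons are combined into a dAPI. As one plausible illustration, the sketch below assumes the aggregate is the median of the individual Beacon values, computed with integer arithmetic as it would be on-chain. The function name and the choice of median are assumptions made for the example.

```python
def aggregate_beacons(values):
    """Combine integer Beacon values into one dAPI value using the median."""
    if not values:
        raise ValueError("at least one Beacon value is required")
    ordered = sorted(values)
    mid = len(ordered) // 2
    if len(ordered) % 2 == 1:
        return ordered[mid]
    # Even count: integer average of the two middle values.
    return (ordered[mid - 1] + ordered[mid]) // 2
```

A median has the property that a single misreporting Beacon cannot move the result outside the range of the honest ones, for example `aggregate_beacons([100, 102, 500])` ignores the outlier.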

The core technical team is responsible for building a scalable infrastructure, which increases the leverage of the ecosystem participants. Developing ecosystems commonly don’t have participants that can utilize the available tools to their full potential. A common pitfall here is for the core technical team to go in and show ’em how it’s done. This is done by choosing a few, large enough clients and bending over backwards for them. This will result in some short-term success and mutual backslapping, but it ends up poisoning the project because:

- The core technical team stops thinking about the untapped potential and only about the needs of the few clients. This is fine if the goal is to become a service provider, but not if it is to build a protocol and an ecosystem on it — imagine Ethereum developed with specific dApps in mind. This stunts the development of the protocol, and the general ecosystem participants will leave for greener pastures because they are not being cared for.

- Once the vision for an ecosystem is lost, the future of the project is now hinged on keeping the existing clients, which means continually building what is asked for rather than the grand vision, so it’s a downward spiral from here.

These observations delayed us from participating in dAPI development. Building Beacons or dAPIs wouldn’t have been enough; they would also need to be actively operated, which would take up a considerable amount of time that we could spend developing the node and the protocol (which can be used for much more than Beacons and dAPIs). That being said, we are indeed the best people available to operate these services reliably. We have a two-fold solution to this:

- Build towards a level of readiness where we would be comfortable with allocating some of our resources towards operations. This has been partly achieved by the recent Airnode releases, which is why we have stepped in now and not earlier.

- Continue the core development in a way to feasibly offload the operations in the future, which would be made possible by making data feed operation more of a set-and-forget matter, most importantly through the implementation of PSP.

In summary, the core technical team has taken over development and technical operation of Beacons, and dAPIs by implication. This work will be carefully balanced with infrastructure development to uphold the long-term interests of API3. From a general point of view, the core technical team is on track with building the deliverables from the whitepaper in the stated three years (of which one has passed) without requiring outside help (assuming that our proposals pass). We are still carefully optimistic about external dAPI operation teams (meaning “external to the core technical team”), and will be designing and documenting the Beacon and dAPI operation process in a way to make that feasible.

Thanks for reading our report, Happy Holidays and best wishes for the New Year for the API3 community!

A Composite Elephant And Rider (Mughal), 1600–1640.

API3 Core Technical Team Report, January 2022

As explained in the December report, the core technical team carefully started expanding its efforts towards building products that utilize Airnode, in addition to building Airnode itself. Beacons are the first of such oracle primitives, yet “building Beacons” doesn’t say much because a Beacon is an abstract product, and is brought into reality by building and combining many pieces of technology. What unites these is that they all depend on or interact with Airnode or its protocol, and this recent work has shown us that Airnode is a really solid foundation to build things on thanks to its design and implementation.

We continued our work on Airnode v0.4 this month, and are expecting to release it in a week. We didn’t rush the work on v0.4 — as the current v0.3.1 is provably capable of powering Beacons — and aimed to achieve all of our wants instead. The scope for v0.5 is agreed on; the features to be implemented will mostly be focused on improving Beacon performance further in terms of cost-efficiency.

Here’s something that you may not have heard about before: Airkeeper. We have been criticizing oracle nodes for not being designed to optimize the user requirements. The truth is, the same requirements apply to keeper nodes and bridges: people want them to be performant and set-and-forget. Furthermore, adding the ability to make API calls to such nodes would supercharge them, and in our case, these would be first-party API calls that can be depended on for executing transactions of financial nature. This all is obviously exciting, but our reason for building Airkeeper now is more practical: We want Beacons to be operable solely through the request–response protocol (RRP).

At the moment, Airkeeper is specifically designed to “keep” a Beacon alive based on an arbitrary deviation threshold. It is similar to Airnode in terms of operational requirements, meaning that it can easily be operated by an API provider. Furthermore, one can operate as many of them as they want in a third-party way to create redundancy, and add an incentive mechanism to increase their robustness. Note that the Airnode protocol also doesn’t include an incentive mechanism. From the whitepaper:

…Finally, let us briefly mention how the Airnode protocol approaches monetization. It is common for a project-specific token to be worked into the core of the protocol in an attempt to ensure that the said token is needed. However, this induces a significant gas price overhead, severely restricts alternative monetization options and creates overall friction. For these reasons, the Airnode protocol purposefully avoids using such a token. Instead…

Here, the motivation is the same. The keeper protocol that isn’t built around a token will eat the others.

I like describing the publish–subscribe protocol (PSP) as an oracle and a keeper rolled into one. Therefore, Airkeeper could be seen as a less-protocolized, proto-PSP node. In other words, Airnode that supports PSP will be able to do everything that the Airkeeper is currently doing, including triggering first-party RRP requests when necessary. We are getting into the weeds a bit here, but the point is, the long-term plan for Airnode is for it to be a first-party, cross-chain oracle and keeper node. This new functionality is developed in components independent from Airnode (such as Airkeeper) to avoid introducing issues to Airnode, which is desired to be very stable. Once this functionality reaches maturity, e.g., is able to support full-PSP, it will be merged to Airnode.
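The "keeping" behavior described above for Airkeeper can be sketched as a simple rule: compare the latest API value with the value currently held by the Beacon, and only trigger an update when the relative difference reaches the configured deviation threshold. The function names, the use of basis points, and the handling of a zero Beacon value are assumptions made for this illustration.

```python
def should_update(beacon_value, api_value, threshold_bps):
    """Return True if api_value deviates from beacon_value by at least threshold_bps."""
    if beacon_value == 0:
        # Relative deviation is undefined; assume any non-zero value warrants an update.
        return api_value != 0
    return abs(api_value - beacon_value) * 10_000 >= threshold_bps * abs(beacon_value)


def keep_beacon(api_values, threshold_bps):
    """Replay a series of API readings and return the Beacon value after each one."""
    history = []
    beacon_value = None
    for api_value in api_values:
        if beacon_value is None or should_update(beacon_value, api_value, threshold_bps):
            beacon_value = api_value  # stands in for an on-chain update transaction
        history.append(beacon_value)
    return history
```

With a 1% threshold (`threshold_bps=100`), readings that drift by less than 1% from the stored value cost nothing, and the Beacon only pays for a transaction when the data has actually moved.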

Another key component of Beacons is their operational side. In a decentralized solution, this means keeping a transparent source of truth, which can then be used to top up the sponsor wallets periodically, visualize the metrics and monitor them to detect anomalies. The key thing we have considered here was to not reuse any Airnode/Airkeeper implementation. Although we have a strong toolbox we can draw from, we are verifying the integrity of this very toolbox, which makes it off-limits for this kind of work.

Finally, one builds a product for it to be used. Any developer knows the importance of a good SDK. However, what that means completely depends on what it’s for. The Airnode protocol is very powerful, which is a requirement for some builders such as OBP. There are also some builders that just need the price of X, and they can’t be bothered with reading through our Airnode documentation, however pedagogical it is. What we are aiming for with the Beacons is to abstract away the entire Airnode implementation, so that the user simply needs to interact with a contract that they read a value from. But even while doing so, we are benefiting from the Airnode protocol design, in that a first-party Airnode has the same address on all chains, and a specific request to these Airnodes is identified by the same ID on all chains, which means we don’t need to duplicate documentation across chains: one thing that works on one chain also works on another in a verifiably identical way.

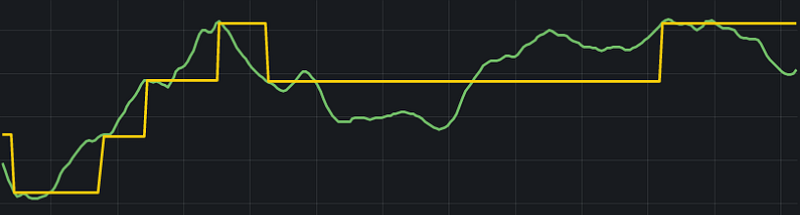

And this is the end product:

A first-party Airnode–Airkeeper pair, operating a Beacon (yellow) that tracks the API response (green) at the deviation threshold they were configured for. We will be making a variety of these Beacons available at ETHDenver, of which API3 is the official DeFi track sponsor. We are big proponents of user-centered design, so we will use the feedback gathered from Web3 developers and projects to hone our design.

As a final note, I find it ridiculous how swiftly and skillfully everything around Beacons was tied together, all the while not disrupting the work on Airnode. For this, I feel the need to congratulate the core technical team.

William Holman Hunt, The Miracle of the Holy Fire, 1892–1899.

API3 Core Technical Team Report, February 2022

We were at ETHDenver and it was glorious! We used this opportunity to showcase Amberdata Beacons, which were received with a lot of enthusiasm and approval. It was encouraging to see that potential users found first-party oracles to make a lot of sense, and that gaining widespread adoption is only a matter of time and effort put into scaling. All this was made possible by the core technical team deciding to expand its efforts into building live data feeds in mid-December, and this quickly coming to fruition with 20 RRP-based Beacons each on 4 chains in early February. This demonstrates how agile one can be when they use Airnode to put together higher level oracle services, and you can expect us to do more of this.

As discussed in the December report, it’s important to balance product development and protocol development. Products are what the market wants and needs, yet a powerful Airnode is what makes it easy to build impactful products. Add good documentation and guides on top of this, and our users will be building the products for us. Based on this reasoning, we did not let Beacon development stop Airnode development, and released Airnode v0.4 early in the month. This release was mostly about improving RRP performance and implementing all of the breaking changes anticipated in v0 early on. Work on v0.5 has started, and it’s expected to be released in March.

Feel free to refer to the links above for more details about what we have built this month, and let me quickly plug a talk I gave at ETHDenver on our approach to designing technical solutions, which places Beacons in a larger framework. You can also take a look at the Beacon workshop or Ashar’s RRP demo. Cheers for reading!

Illumination from Pontifical of Renaud de Bar, 1303–1316.

API3 Core Technical Team Report, March 2022

We have released Airnode v0.5, which implements all remaining requirements for Beacons. We continue to have our contracts audited on a rolling basis, and a new preliminary report verifies (for the fourth time) that the v0 protocol contracts do not contain any vulnerabilities. Accordingly, you can expect a v0.6 release this coming April that rolls out the v0 protocol on more EVM chains. Furthermore, work has started on porting Beacons and dAPIs to some non-EVM chains. Details about the scope and budgeting of this work will be shared in the coming months.

After the v0.5 release, we were able to focus more on Beacon-specific development. The contract that implements Beacons and dAPIs is designed to be compatible with all Airnode protocols (RRP, PSP, relayed RRP, relayed PSP, API-signed data). In contrast, the ETHDenver Beacons are being operated only using RRP — which has proven itself to be quite reliable on its own. We will operate our production Beacons using PSP for cost-efficiency and improved responsiveness. On top of this, we will utilize the API-signed data served by the HTTP endpoint implemented in Airnode v0.5. This scheme is superior to even PSP in terms of cost-efficiency and responsiveness, but more importantly, it allows the core technical team to respond to incidents without disturbing the trust-minimized, first-party nature of the service. Using PSP and API-signed data simultaneously allows us to enjoy the advantages of both without any degradation in trustlessness because the data will always be cryptographically signed by the API provider.

Finally, for those who have missed it, we have pushed an updated DAO dashboard version to production. With that, let me link you to an article where we do a deep dive into dAPIs.

Katsushika Hokusai, Remarkable Views of Bridges in Various Provinces: Old View of the Boat-bridge at Sano in Kōzuke Province, 1833.

API3 Core Technical Team Report, April 2022

We have finalized our audit with Trail of Bits, which covers the Airnode protocol v1: RRP, PSP, relayed RRP, relayed PSP and signed data-based usage. All of these protocols are used in our dAPI server contract, and will go into production soon to power Beacons. In addition to the protocol contracts, the Airnode monetization contracts were audited as well, which means they can be used as is (for example, if you want to set up an Airnode that only responds if the requester has paid a subscription fee in stablecoins) or as a solid foundation to build your custom logic on. Our work on the protocol side is done for the foreseeable future. Now, the node needs to catch up and implement these protocols fully.

A lot of the v0 and v1 contracts overlap, which means the audit above also applies to protocol v0. With this added assurance, we have released Airnode v0.6, where we have rolled out official Airnode integrations to the following smart contract platforms and their testnets: Ethereum, Arbitrum, Avalanche, BNB Chain, Fantom, Gnosis Chain, Metis, Milkomeda C1 (Cardano), Moonbeam, Moonriver, Optimism, Polygon and RSK. Projects building on these chains can expect to be able to enjoy API3 services earlier, yet we’re planning to address the rest of our backlog rapidly.

One of the biggest pieces of technical news this month was the announcement of the ChainAPI beta access. After a lot of development and closed testing, ChainAPI is finally ready to meet its first users. At this stage, ChainAPI helps the user create and manage API–Airnode integrations, and deploy Airnodes that will serve these integrations. If you’re planning to run an Airnode, I strongly recommend that you apply for beta access.

I don’t want to dilute these three big pieces of news with filler, so I’ll keep this one short. Let me end by plugging an article I’ve published recently: Commercial building blocks, which is essentially a personal time-investment thesis, as in why I find API3 worth working on above everything else.

Sophie Green, The March, 2021.

API3 Core Technical Team Report, May 2022

We did something uncommon this month and revealed QRNG without any prior mention. A first-party oracle service being provided on 13 mainnets and 4 testnets, launched on all of them simultaneously, is a first, and it foreshadows what Airnode will do in the future. There are two main reasons why we were able to do this:

- The single Airnode that ANU Quantum Numbers deployed is serving all of these chains simultaneously, in an isolated way. This allows us to provide the same service on more chains with no additional cost or maintenance requirement.

- The Airnode protocol allows the requester to cover all gas costs, which is one of the things that makes it set-and-forget. This is especially beneficial if the chain has limited infrastructure and getting a hold of some native currency that is used for gas costs is relatively difficult.

As you can imagine, these advantages translate to all use-cases when it comes to serving under-served chains, and not only QRNG.

One important benefit of working on QRNG was that we had to do some R&D to make it possible, which provided important insights about API response caching, stateful authentication methods such as OAuth2 and a way to implement them with a stateless Airnode, without having to resort to custom builds or external dependencies. In this regard, we got much more out of QRNG than the time that we put into developing it.

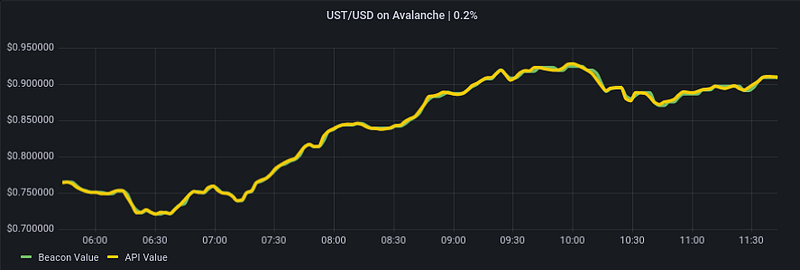

We have continued focusing the majority of our attention on building Beacons and dAPIs. As you may know, we have been operating 25 Beacons each on 4 testnets since January. We have also been operating Beacons on 4 mainnets since the end of April, with over 30,000 Beacon updates! One of these Beacons was UST/USD on Avalanche, which has proven to be the perfect stress test (see below). Overall, we’re happy to say that our Beacons have performed excellently during these trying market and chain conditions, and we can’t wait to allow projects to start using them.

Airnode gazing into the abyss.

Illumination from Ein wahres Probiertes und Pracktisches geschriebenes Feuerbuch, 1607.

API3 Core Technical Team Report, June 2022

We have released Airnode v0.7, which was a big update that both reworked parts of Airnode and implemented new features that we’re using heavily in new integrations. We have started working on v0.8 and an important part of this release is that its development will be tracked publicly. This has two implications: (1) The users will know what to expect from v0.8 before it’s released, (2) External contributions will be more feasible, as one can pick up a task from the backlog and work on it.

Another important development is that the OIS (Oracle Integration Specifications) implementation in the Airnode monorepo has been migrated to its own repo (and the package name has been updated from @api3/airnode-ois to @api3/ois). We had already separated the OIS docs from the Airnode docs, and this sets OIS further apart from Airnode as an oracle integration standard, rather than the integration specification format of a specific oracle node implementation. The current version of OIS was specified almost 2 years ago and what is expected from an oracle has changed since then. Specifically, in addition to delivering API responses, oracles are required to read on-chain, archival and log data from arbitrary chains, and combine the resulting data in arbitrary ways. By supporting this functionality in coming versions of OIS, we will enable first-party keepers and bridges, in addition to more novel services.

ChainAPI being launched this week is probably important news for people reading our reports. For people who have already used an Airnode following our docs, the most interesting feature that ChainAPI provides is being able to check the status of the Airnode you have deployed. It is generally recommended for API providers to have redundant deployments. These should at least be in separate availability zones, but the gold standard is a multi-cloud deployment (for example, Amberdata has an AWS and a GCP deployment at the moment). Furthermore, we practice A/B updates, which means an old deployment isn’t taken down before the new one is confirmed. Therefore, to provide a very reliable service, an API provider will sometimes have four deployments at a time on multiple platforms, which is obviously difficult to juggle. Airnodes deployed using ChainAPI are configured to send heartbeats to ChainAPI (a fully-outbound, trustless process), which allows ChainAPI to display what deployments you have online at a time, on which cloud providers and regions, etc. This functionality and everything else that ChainAPI provides is completely trustless (so that it doesn’t degrade the security guarantees that first-partyness provides) and free of charge.
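To make the heartbeat idea concrete, here is a minimal sketch of how a status view could decide which deployments are online. The `Heartbeat` fields, the deployment identifiers, and the 120-second freshness window are hypothetical choices for this example; they do not describe ChainAPI's actual schema or logic.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Heartbeat:
    # All field names are assumptions made for this illustration.
    deployment_id: str
    cloud_provider: str
    region: str
    received_at: float  # Unix timestamp in seconds


def online_deployments(heartbeats: Iterable[Heartbeat], now: float,
                       max_age_seconds: float = 120.0) -> List[Heartbeat]:
    """Return the latest heartbeat of each deployment seen recently enough."""
    latest = {}
    for hb in heartbeats:
        previous = latest.get(hb.deployment_id)
        # Keep only the most recent heartbeat per deployment.
        if previous is None or hb.received_at > previous.received_at:
            latest[hb.deployment_id] = hb
    # A deployment counts as online if its latest heartbeat is fresh.
    fresh = (hb for hb in latest.values()
             if now - hb.received_at <= max_age_seconds)
    return sorted(fresh, key=lambda hb: hb.deployment_id)
```

With a view like this, the four deployments of a multi-cloud provider in the middle of an A/B update would show up as four separate entries, each with its own cloud provider and region.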

As a final note, we’re finally there with our dAPIs. We have been running them on various mainnets for a long time now with great success. We have revamped our data feed docs to be more dAPI-focused, including the dAPI Browser embedded in our docs that displays on-chain data in real-time. While continuing the development of Beacon set-based dAPIs and the coverage product (and by the way, we released a whitepaper patch related to that), our next step is to start getting our first dAPIs in use.

Illumination from Feuer Buech, 1584.

API3 Core Technical Team Report, July 2022

We’ve been running testnet Beacons since January and mainnet Beacons since the end of April. We encountered some exciting chain and market conditions during this period, yet Airnode performed as intended without any surprises. As a result, we finally decided to ship our data feeds. Note that we provide our data feeds not only as Beacons, but also as dAPIs. Currently, the dAPIs are pointed to individual Beacons by a 3 of 6 multisig controlled by members of the core technical team (more documentation about this is coming). In the near future, we will set up Beacon sets and point the dAPIs to them where appropriate.
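The relationship between dAPI names, the Beacons they point to, and the 3 of 6 approval rule can be pictured with a small in-memory model. This is only a sketch under stated assumptions: the class name `DapiRegistry`, its methods, and the signer and feed identifiers are hypothetical, and the real system is an on-chain contract managed by a multisig wallet, not this code.

```python
class DapiRegistry:
    """Toy model: a dAPI name is repointed once enough signers approve."""

    def __init__(self, signers, required):
        unique_signers = frozenset(signers)
        if not 0 < required <= len(unique_signers):
            raise ValueError("required must be between 1 and the signer count")
        self.signers = unique_signers
        self.required = required
        self.pointers = {}    # dAPI name -> data feed (Beacon) ID
        self._approvals = {}  # (dAPI name, data feed ID) -> approving signers

    def approve(self, signer, dapi_name, data_feed_id):
        """Record an approval; return True if the dAPI was repointed."""
        if signer not in self.signers:
            raise PermissionError(f"{signer} is not a signer")
        key = (dapi_name, data_feed_id)
        approvals = self._approvals.setdefault(key, set())
        approvals.add(signer)  # a set, so repeat approvals do not count twice
        if len(approvals) >= self.required:
            self.pointers[dapi_name] = data_feed_id
            del self._approvals[key]
            return True
        return False

    def resolve(self, dapi_name):
        """Return the data feed ID the dAPI name currently points to."""
        return self.pointers[dapi_name]


# Hypothetical usage: six signers, three approvals needed.
registry = DapiRegistry(["s1", "s2", "s3", "s4", "s5", "s6"], required=3)
```

The point of the indirection is that a consumer reads by dAPI name, so the team can later repoint the same name from an individual Beacon to a Beacon set without the consumer changing anything.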

The API3 whitepaper proposes that service level guarantees in the form of an insurance-like product are the ideal security mechanism for oracles (and that this wouldn’t be optimally cost-efficient with non-first-party oracles). Even though we haven’t released this product yet (and the data feeds will be free to use until then), the fact that we’re planning to do so in the near future caused us to consider it at every step of designing the data feeds and deciding if they are ready to be used yet. “Would we want to insure this at scale?” was a question that we frequently asked ourselves, and we made decisions accordingly. This validates the idea that oracle services should not only come with coverage, but that this coverage should ideally be provided by the provider of the service, in this case API3.

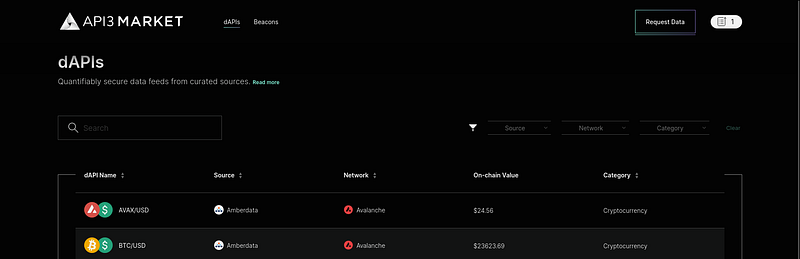

The second major product we launched this month is API3 Market. We had been working on this with Protofire for the last three months, with significant contribution from our team due to the importance of the project. The result is very slick and pretty, so check it out if you haven’t already. The core technical team has fully taken it over from here and has already started improving it based on user feedback.

The main difference between API3 Market and data feed pages built by other oracle projects is obvious from the name: API3 Market is an interactive platform where you would go to buy access and coverage for oracle services (rather than the typical one that is designed to prove to token holders that the project has working data feeds). This is directly related to our vision for API monetization on Web3, and you can consider API3 Market to be one of the foundations that we will build this on.

Simone Martini, Miracle of the Child Falling From the Balcony, 1324.

API3 Core Technical Team Report, August 2022

In preparation for our insurance-like service coverage product, we scheduled a fourth audit of the DAO and the staking pool to be done this month. Unlike the others, the scope of this audit included the front-end, and there were two critical findings in the module we specifically requested extra attention for. These findings are now patched in production, and we confirmed that they haven’t been exploited in the past. There were no findings relating to contract code that warranted a response. We will publish the audit report once it’s finalized, from which you can get detailed information.

On the data feed operation side, we were set back by Airnode issues around GCP deployments. We’ll rework the related components to fix these problems and potentially implement features that weren’t possible before.

With this 3-month cycle, the core technical team kicked off the monetization effort. This primarily relates to premiums paid for service coverage policies being used to buy back API3 tokens from the market and burn them, and to API providers being compensated at scale. We’re planning the end result to be an end-to-end flow that starts at the API3 Market and ends with API3 token scarcity and happy API providers.

Katsushika Hokusai, The Wave (outpainted), 1831.

API3 Core Technical Team Report, September 2022

We released Airnode v0.8 this month, which introduces a few useful features that we’ve been using in customized versions for a while. We’re currently working on v0.9, and have already planned out v0.10 and v0.11. As a reminder, we track our work on a public board (even though we can’t make all issues publicly visible), and have started representing more of our work there by fully migrating from our Jira boards.